Artificial neuron: Difference between revisions

imported>Felipe Ortega Gutiérrez (No edit summary)

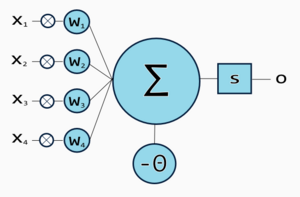

Figure: McCulloch-Pitts neuron with 4 inputs.

Revision as of 00:12, 5 July 2007

Artificial neurons are processing units based on the biological neural model. The first artificial neuron model was created by McCulloch and Pitts, and newer, more complex models have appeared since. Because biological neurons are much more highly connected, artificial neurons must be considered only an approximation of the biological model.

Artificial neurons can be organized and connected to create artificial neural networks, which process the data carried through the neural connections in different layers. Learning algorithms can also be applied to artificial neural networks to modify their behavior.

Behavior

A neuron may have multiple inputs. Each input has an assigned value called its weight, which represents the strength of the connection between the source and destination neurons. The input signal value is multiplied by the weight <math>w \in \left[0 \dots 1\right]</math>.

The sum of all input values multiplied by their respective weights is called the activation, or weighted sum:

<math>a = \sum_{i=0}^n w_i x_i</math>
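The weighted sum can be sketched in a few lines of Python. This is a minimal illustration, assuming inputs and weights are plain lists of equal length; the function name `activation` and the example values are hypothetical, not taken from the article.

```python
def activation(inputs, weights):
    """Return the weighted sum of the inputs (the neuron's activation)."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Multiply each input by its weight and add up the products.
    return sum(w * x for w, x in zip(weights, inputs))


# Hypothetical example: four inputs with weights chosen inside [0, 1].
a = activation([1.0, 0.0, 1.0, 1.0], [0.5, 0.25, 0.125, 0.25])
print(a)  # 0.875
```

An input of 0 contributes nothing to the sum regardless of its weight, which is why the second weight has no effect in this example.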

After the activation is produced, a function modifies it to produce the output. That function is often called the transfer function, and its purpose is to filter the activation:

<math>y = \varphi \left( \sum_{i=0}^n w_i x_i \right)</math>
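The two stages (weighted sum, then transfer function) can be sketched as follows. The function name `neuron_output` is hypothetical, and `math.tanh` is used here only as one possible transfer function; the article does not prescribe a particular choice.

```python
import math


def neuron_output(inputs, weights, transfer):
    """Compute the activation, then pass it through the transfer function."""
    a = sum(w * x for w, x in zip(weights, inputs))  # activation (weighted sum)
    return transfer(a)                               # filtered output y


# Hypothetical inputs and weights; tanh is an assumed transfer function.
y = neuron_output([1.0, 2.0], [0.5, 0.25], math.tanh)
print(y)
```

Passing the transfer function as an argument keeps the weighted-sum step unchanged when a different transfer function is substituted.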

Transfer Functions

Transfer functions are the functions that apply the threshold to the activation value. They can be discrete or continuous, and they can also be defined as step functions.
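As an illustration of the discrete and continuous cases, the sketch below defines a step function and a sigmoid. Both are common choices rather than ones named in the article, and the threshold value of 0.5 is an arbitrary assumption.

```python
import math


def step(a, threshold=0.5):
    """Discrete transfer function: 1 if the activation exceeds the threshold, else 0."""
    return 1 if a > threshold else 0


def sigmoid(a):
    """Continuous transfer function: squashes the activation smoothly into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-a))


# Compare both functions on a few hypothetical activation values.
for a in (-1.0, 0.0, 0.6, 2.0):
    print(a, step(a), sigmoid(a))
```

The step function changes abruptly at the threshold, while the sigmoid changes gradually, which makes it differentiable everywhere.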

Impulse pass

Depending on the network model, neurons can pass their impulses forward to their terminals, or backwards. The "backward pass" can be observed in learning algorithms such as backpropagation.
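The sketch below shows a forward pass and one backward pass for a single neuron. It is not full backpropagation through a layered network, only a single gradient-descent step that such algorithms build on. The sigmoid transfer function, the squared-error measure, the learning rate, and all names and values are assumptions made for illustration.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def forward(inputs, weights):
    """Forward pass: weighted sum followed by the (assumed) sigmoid transfer function."""
    a = sum(w * x for w, x in zip(weights, inputs))
    return sigmoid(a)


def backward(inputs, weights, target, learning_rate=0.1):
    """Backward pass: one gradient step on the error E = 0.5 * (y - target)^2."""
    y = forward(inputs, weights)
    # dE/da = (y - target) * y * (1 - y), because sigmoid'(a) = y * (1 - y).
    delta = (y - target) * y * (1.0 - y)
    # dE/dw_i = delta * x_i, so each weight moves against its gradient.
    # Note: no clamping is applied, so weights are not forced to stay in [0, 1].
    return [w - learning_rate * delta * x for w, x in zip(weights, inputs)]


# Hypothetical training example.
inputs, weights, target = [1.0, 0.5], [0.2, 0.8], 1.0
before = forward(inputs, weights)
weights = backward(inputs, weights, target)
after = forward(inputs, weights)
print(before, after)  # the output moves toward the target
```

The error signal computed at the output is sent back toward the inputs to adjust the weights, which is the sense in which the pass runs "backwards".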

Analogy to Biological Neurons

Biological neurons behave in a similar way. Inputs are electrical pulses transmitted to the synapses (terminals in the dendrites). The electrical pulses trigger a release of neurotransmitters, which may alter the dendritic membrane potential (the postsynaptic potential). The postsynaptic potential travels over the axon and reaches another neuron, which sums all the postsynaptic potentials it receives and fires an output if their total at the axon hillock exceeds a threshold.
