Byte

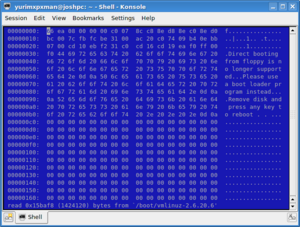

In computer science, a byte is a unit of data that, in modern usage, consists of eight bits. Grouped together, bytes can hold the information that makes up a document, such as a photograph or a book. All data on a computer are ultimately composed of bytes, from e-mails and pictures to programs and other data stored on a hard drive. Although the byte may appear to be a simple concept at first glance, it has several subtleties.

Technical definition

In digital electronics, information is represented by switching between two states, usually referred to as 'on' and 'off'. Computer scientists represent these states with the values 0 (off) and 1 (on); a single such value is called a bit.

Each byte is made of eight bits and can therefore represent any whole number from 0 to 255, or 256 distinct values when 0 is included. This count is obtained by raising the number of values a single bit can take (two) to the power of the number of bits in a byte (eight): 2⁸ = 256.
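As a quick check of this arithmetic, the following minimal Python sketch counts the values an n-bit unit can hold and prints the eight-bit pattern of a few byte values. The helper names `distinct_values` and `bit_pattern` are hypothetical, chosen only for illustration.

```python
def distinct_values(num_bits: int) -> int:
    """Number of distinct values representable with num_bits bits (2 ** num_bits)."""
    return 2 ** num_bits


def bit_pattern(value: int) -> str:
    """Return the eight-bit binary pattern of a byte value, most significant bit first."""
    if not 0 <= value <= 255:
        raise ValueError("a byte holds values from 0 to 255")
    return format(value, "08b")  # zero-padded to exactly eight binary digits


print(distinct_values(8))  # 256
print(bit_pattern(0))      # 00000000
print(bit_pattern(5))      # 00000101
print(bit_pattern(255))    # 11111111
```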

Bytes can represent a wide variety of data types, from the characters in a string of text to the assembled and linked machine code of an executable file. Every file, region of system memory, and network stream is composed of bytes.
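To make the idea of text stored as bytes concrete, here is a small Python sketch that encodes a string and inspects the resulting byte values. It assumes the ASCII and UTF-8 character encodings; other encodings map characters to bytes differently.

```python
text = "Hi!"
encoded = text.encode("ascii")  # each ASCII character becomes exactly one byte

print(encoded)        # b'Hi!'
print(list(encoded))  # [72, 105, 33]
print(len(encoded))   # 3

# Decoding maps the bytes back to characters.
print(encoded.decode("ascii"))  # Hi!

# In UTF-8, characters outside ASCII take more than one byte.
accented = "é".encode("utf-8")
print(list(accented))  # [195, 169]
```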

Endianness

Many values, such as integers larger than 255, occupy more than one byte, so the bytes that make up such a value must be arranged in an agreed order for a device to read it correctly. This ordering is called endianness. Just as some human languages, such as English, are written from left to right while others, such as Hebrew, are written from right to left, bytes are not always arranged in the same order.

In big-endian order, the most significant byte is stored first, at the lowest memory address. In little-endian order, the least significant byte is stored first, so the most significant byte ends up at the highest address. Neither order is universal: x86 processors, for example, are little-endian, while the core Internet protocols transmit multi-byte values in big-endian order, known as network byte order. For this reason, file formats and network protocols must specify which byte order they use.
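The two orders can be compared in a short Python sketch that serializes the same 32-bit integer both ways using the standard `int.to_bytes`, `int.from_bytes`, and `struct.pack` functions. The value `0x12345678` is an arbitrary example chosen so that each byte is easy to spot.

```python
import struct
import sys

value = 0x12345678  # a 32-bit integer that occupies four bytes

big = value.to_bytes(4, byteorder="big")        # most significant byte first
little = value.to_bytes(4, byteorder="little")  # least significant byte first
network = struct.pack("!I", value)              # "!" selects network (big-endian) order

print(big.hex())       # 12345678
print(little.hex())    # 78563412
print(network == big)  # True

# Reading little-endian bytes as if they were big-endian gives a different number.
print(hex(int.from_bytes(little, byteorder="big")))  # 0x78563412

# Native byte order of the machine running this script, e.g. "little" on x86.
print(sys.byteorder)
```

Reading the bytes back with the wrong order silently produces a different number, which is why the byte order has to be agreed on in advance.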

Word origin

The exact origin of the word 'byte' is uncertain, but it is generally credited to Dr. Werner Buchholz of IBM, who is believed to have coined it in 1956. It is a play on the word 'bit' and originally referred to the number of bits used to represent a character.[1] This number is usually eight, but in some cases (especially in the past) it has been anything from 2 to as many as 128 bits. The word 'byte' is therefore ambiguous, and for this reason an eight-bit byte is sometimes called an 'octet'.[2]

Multiples of the byte

Although the byte is the basic unit of data, it is rarely the most convenient one. Because files are often thousands or even billions of times larger than a byte, larger units are used to keep sizes readable. Metric prefixes are added to the word byte: kilo for one thousand bytes (kilobyte), mega for one million (megabyte), giga for one billion (gigabyte), and tera for one trillion (terabyte). One thousand gigabytes make up a terabyte, and at the time of writing the largest consumer hard drives held only three-quarters of a terabyte (750 gigabytes, or 'gigs'). The rapid pace of technology may, however, make the terabyte a common sight in the future.
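A minimal Python sketch of how software might turn a raw byte count into a readable size with these decimal (SI) prefixes, dividing by 1000 at each step. `format_si` and `SI_UNITS` are hypothetical names, and rounding to one decimal place is an arbitrary presentation choice.

```python
# Hypothetical unit table: SI symbols for successive powers of 1000.
SI_UNITS = ["kB", "MB", "GB", "TB", "PB", "EB", "ZB", "YB"]


def format_si(num_bytes: int) -> str:
    """Format a byte count with decimal (SI) prefixes, one decimal place."""
    if num_bytes < 1000:
        return f"{num_bytes} B"
    value = num_bytes / 1000
    unit_index = 0
    # Keep dividing while the value is still at least 1000 and a larger unit exists.
    while value >= 1000 and unit_index < len(SI_UNITS) - 1:
        value /= 1000
        unit_index += 1
    return f"{value:.1f} {SI_UNITS[unit_index]}"


print(format_si(512))              # 512 B
print(format_si(1500))             # 1.5 kB
print(format_si(750_000_000_000))  # 750.0 GB
```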

Conflicting definitions

Traditionally, the computer industry has used 1024 rather than 1000 bytes for a kilobyte. Memory sizes are naturally powers of two, and 1024 (2¹⁰) is the power of two closest to 1000. This usage is now non-standard: under the binary prefixes introduced by the International Electrotechnical Commission (IEC), 1024 bytes is called a kibibyte (KiB), while a kilobyte (kB) means exactly 1000 bytes.
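The binary convention can be sketched in Python by dividing by 1024 at each step and labeling the result with IEC symbols. `format_iec` and `IEC_UNITS` are hypothetical names used only for illustration.

```python
# Hypothetical unit table: IEC symbols for successive powers of 1024.
IEC_UNITS = ["KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB", "YiB"]


def format_iec(num_bytes: int) -> str:
    """Format a byte count with binary (IEC) prefixes, one decimal place."""
    if num_bytes < 1024:
        return f"{num_bytes} B"
    value = num_bytes / 1024
    unit_index = 0
    # Keep dividing while the value is still at least 1024 and a larger unit exists.
    while value >= 1024 and unit_index < len(IEC_UNITS) - 1:
        value /= 1024
        unit_index += 1
    return f"{value:.1f} {IEC_UNITS[unit_index]}"


print(format_iec(1024))             # 1.0 KiB
print(format_iec(1536))             # 1.5 KiB
print(format_iec(750_000_000_000))  # 698.5 GiB
```

A drive labeled 750 GB (750 × 10⁹ bytes) therefore comes out at about 698.5 GiB, which is why such a drive can appear smaller when its size is reported in binary units.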

Although the difference between 1000 and 1024 may seem trivial, the gap widens with each larger prefix. One tebibyte (TiB), for instance, is about 1.1 times, or roughly 10%, larger than one terabyte (TB). As hard drives grow, the distinction between the two kinds of prefix becomes more important. This has been a particular problem for hard disk drive manufacturers, which label capacities using powers of 10 while operating systems often report them using powers of 2. For example, Western Digital was sued over its use of decimal units when labeling the capacity of its drives and settled the case in 2006.[3]
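The widening gap can be computed directly. This Python sketch, built around the hypothetical helper `binary_excess_percent`, prints how much larger each binary unit is than its SI counterpart; at the tera level the excess is just under 10%.

```python
SI_PREFIXES = ["kilo", "mega", "giga", "tera", "peta", "exa", "zetta", "yotta"]


def binary_excess_percent(power: int) -> float:
    """Percentage by which 1024**power bytes exceeds 1000**power bytes."""
    return (1024 ** power / 1000 ** power - 1) * 100


# power 1 is kilo/kibi, power 2 is mega/mebi, and so on.
for power, prefix in enumerate(SI_PREFIXES, start=1):
    print(f"{prefix:>5}: {binary_excess_percent(power):5.1f}%")
```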

Table of prefixes

| SI prefix (symbol) | Value (bytes) | Binary prefix (symbol) | Value (bytes) | Ratio (binary ÷ SI) |
|---|---|---|---|---|
| kilobyte (kB) | 10³ | kibibyte (KiB) | 2¹⁰ | 1.024 |
| megabyte (MB) | 10⁶ | mebibyte (MiB) | 2²⁰ | 1.048576 |
| gigabyte (GB) | 10⁹ | gibibyte (GiB) | 2³⁰ | 1.073741824 |
| terabyte (TB) | 10¹² | tebibyte (TiB) | 2⁴⁰ | 1.099511627776 |
| petabyte (PB) | 10¹⁵ | pebibyte (PiB) | 2⁵⁰ | 1.125899906842624 |
| exabyte (EB) | 10¹⁸ | exbibyte (EiB) | 2⁶⁰ | 1.152921504606846976 |
| zettabyte (ZB) | 10²¹ | zebibyte (ZiB) | 2⁷⁰ | 1.180591620717411303424 |
| yottabyte (YB) | 10²⁴ | yobibyte (YiB) | 2⁸⁰ | 1.208925819614629174706176 |

References

- [1] Dave Wilton (2006-04-08). Wordorigins.org: bit/byte.

- [2] Bob Bemer (accessed 2007-04-12). Origins of the Term "BYTE".

- [3] Nate Mook (2006-06-28). Western Digital Settles Capacity Suit.

Related topics

Subtopics

- Half of a byte (four bits) is referred to as a nibble.

- A word is the fixed-size unit of data that a processor handles natively, and its size depends on the architecture. On word-addressable machines, memory can only be addressed in whole words, whereas most modern processors are byte-addressable. For example, a 16-bit processor has words of two bytes (2 × 8 = 16 bits), a 32-bit processor has words of four bytes (4 × 8 = 32 bits), and so on.
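Both ideas can be illustrated with bit operations in a short Python sketch: splitting a byte into its two nibbles, and combining two bytes into one 16-bit word. The helper names are hypothetical, and the 16-bit word with its low byte first (little-endian) is an assumption made for this example; real word sizes and byte orders depend on the architecture.

```python
def split_nibbles(byte: int) -> tuple[int, int]:
    """Split a byte (0-255) into its high and low nibbles (four bits each)."""
    if not 0 <= byte <= 255:
        raise ValueError("a byte holds values from 0 to 255")
    return byte >> 4, byte & 0x0F  # shift out the low nibble; mask off the high one


def bytes_to_word(low: int, high: int) -> int:
    """Combine two bytes into one 16-bit word, assuming the low byte comes first."""
    return (high << 8) | low


print([hex(n) for n in split_nibbles(0xAB)])  # ['0xa', '0xb']
print(hex(bytes_to_word(0x34, 0x12)))         # 0x1234
```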